We’re used to Meta copying everything new for its own purposes. That’s how we got Reels and more than a few other features besides. But Make-A-Video, a video-centric spinoff of the AI-generated images that are so hot right now, is a cloned technology we were not expecting to see.

And yet, here we are. Meta CEO Mark Zuckerberg introduced the technology in a Facebook post earlier this week. Like other AI-powered image generators, it works from a text prompt. But apparently, it’s harder to create videos than static images.

DALL-E, but make it video

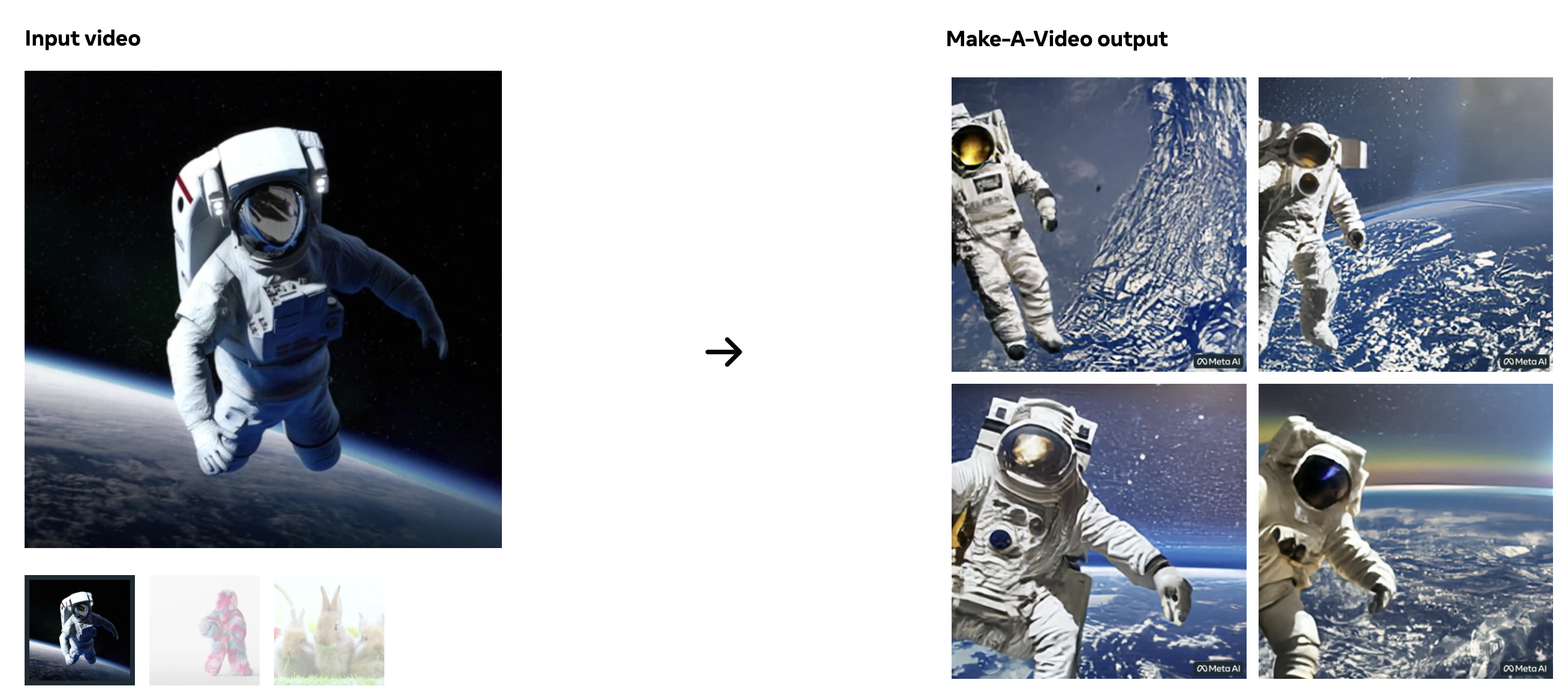

The AI system isn’t just matching text prompts with existing videos and tweaking them slightly. It can work from a text prompt, a single image, or a pair of images, and extrapolate how the objects described in the scene should move. The strange part is that Make-A-Video more or less taught itself.

Zuckerberg explained that it’s “…much harder to generate video than photos because beyond correctly generating each pixel, the system also has to predict how they’ll change over time.”

“Make-A-Video solves this by adding a layer of unsupervised learning that enables the system to understand motion in the physical world and apply it to traditional text-to-image generation.”


You might be asking yourself, ‘What’s the point?’ Or perhaps you’re asking, ‘Why can’t they build an AI that picks good names for new technology?’ Both are fair questions. Make-A-Video is being used to train AI systems, but mostly it’s getting Meta some fresh attention. Everyone’s talking about AI-generated art, so taking the next step to AI-generated video is a logical move.

You can’t try out Meta’s generation engine for yourself. Yet. There’s a plan afoot to release Make-A-Video to the world as a demo. Then we’ll see what horrific slices of nightmare fuel the general public is able to create.