If social media were a person, you’d probably avoid them.

Facebook, Twitter and Instagram are loaded with pictures of people going to exotic places, looking like they are about to be on the cover of Vogue, and otherwise living a fairy-tale existence. And, like all fairy tales, these narratives feel a lot like fiction.

When you compare the “projected reality” to your lived experience, it would be easy to conclude that you do not measure up. Research shows that young adults are especially vulnerable to this phenomenon.

We have also studied this trend in graduate students, our next generation of scholars: they, too, implicitly compare themselves to their peers, sometimes automatically. We’re socially trained to do this, as shown by a litany of research studies exploring our relationships with others’ projected images.

These implicit comparisons can threaten your innate psychological needs: autonomy, competence and relatedness. Not just one of them. ALL OF THEM. And such comparisons have shifted life online towards an unwinnable competition.

We are outnumbered and out-posted by other people and it can make us feel unequivocally terrible if we let it. It’s never been easier to be insecure about ourselves and our achievements thanks to the ever-present torrent of “updates” posted by mostly well-meaning people seeking opportunities for connection and validation.

Where did this come from?

Social media fills our days, but it hasn’t always. In fact, sites and apps like the micro-blogging platform Tumblr (2007), the bite-sized conversation builder Twitter (2006) and star-studded Instagram (2010) all arrived on the technology scene in tandem with the e-book revolution. And yet, in just over a decade, these tools have exploded across our browsers, into our phones and onto our self-perceptions.

People appear to be spending an hour a day on various social media apps, which doesn’t sound too rough if we assume everyone is only using one app. However, the tendency for younger users to embrace multiple social media apps (and to access their accounts multiple times a day) is increasing.

What that means for many of us is that we are spending hours each day connected and consuming content, from short tweets to beautifully staged #bookstagram images to painstakingly crafted selfies that sometimes make it seem like our friends are living the glamorous life, even when they’re waking up before dawn to take care of their little ones.

https://www.instagram.com/p/Bstn-T9FozD/

Social media presences are not inherently fake, but some people interacting in these spaces feel pressure to perform. And that’s not always bad!

As argued by Amy Cuddy, sometimes it’s helpful to pretend we are who we want to be in order to give ourselves the confidence to grow into our futures. There’s a rich history to “acting as if” in spiritual and growth-oriented spaces. But there’s a line between “fake it till you become it” and spending the afternoon shooting awkward photos to gain more “likes.”

Dark point of the soul

After conducting about 60 interviews and 2,500 surveys across two ongoing studies of post-secondary students, we have found that being constantly compared to other people can demolish our confidence quickly.

For example, one first-year PhD student told us: “I feel like a failure because I don’t have any papers out and I haven’t won a major scholarship like the rest of my lab group.” A first-year student?!

Another commented: “All my peers are better than me, why am I even here?”

These are high-performing thinkers, and yet their confidence is being steamrolled in part because social media does not facilitate fair comparisons.

We wish these experiences were unique to certain contexts, but they are ubiquitous. We’ve become so used to seeing the world through social media that we give it false equivalence with our lived experience. We implicitly compare our lives against the sensation of social media and consider it a fair contest.

Of course, the mundane doesn’t measure up to social media. Social media posts need to be epic to be shared.

Hardly anyone posts a “meh” status update; our social media posts are typically at one extreme or another, good or bad, and we are left to compare our individual realities with an exceptional anecdote devoid of context. It’s all of the sugar, with none of the fibre.

It’s not all a pit of despair

Despite this relatively grim picture, the way we’re performing on social media isn’t entirely destructive. For starters, the awareness that we all seem to have about the inauthentic presentations of people’s lives that we consume online (and the painful comparisons that often follow) has also spawned subversively creative acts of satire.

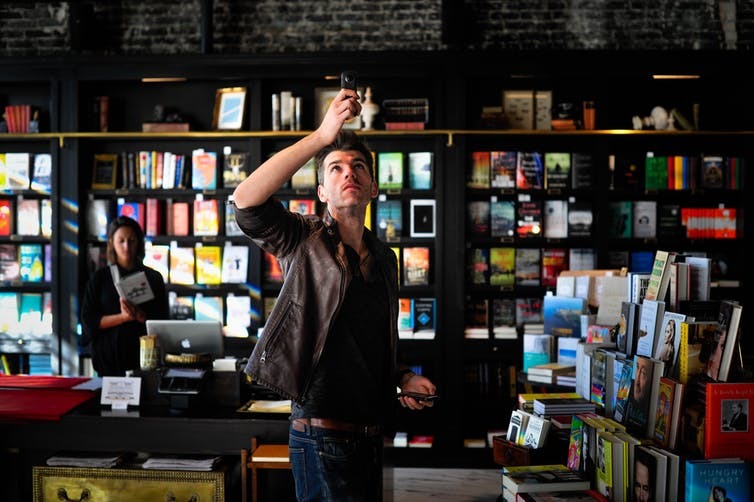

One example comes from “It’s Like They Know Us,” a blog/book/parenting subculture that’s built around taking stock images of families and providing captions that poke fun at the impossible standards these images perpetuate. And articles like the recent “How to Become Instagram Famous Experiment” remind us all that behind the carefully cultivated images rests a series of failed attempts and sometimes ridiculous efforts to capture the perfect shot.

There’s a perverse kind of creativity that our image-saturated web presence has spawned. And as often as we fall into the destructive cycle of comparing our messy, authentic lives to the snapshots of perfection that we see online, we just as often step back and laugh at how silly it all is.

Perhaps we’re merely playing along; isn’t it fun to think, just for a moment, that somewhere out there, someone is really living their best life? And maybe, just maybe, if we arrange our books in an artful composition or capture a stunning selfie on the 10th attempt, maybe we will be able to see the beauty that exists in each of our imperfectly messy, chaotic, authentic realities beyond the picture.

Maybe it’s good for us to “act as if,” as long as we remember that the content we share and engage with online is only a fraction of our real stories. Remember, even fairy tales have a grain of truth.

- is a PhD Candidate in Education, Queen’s University, Ontario

- is a PhD Student in Education, Queen’s University, Ontario

- This article first appeared on The Conversation