With the emergence of advanced AI systems, the way social science research is conducted could change. Social sciences have historically relied on traditional research methods to gain a better understanding of individuals, groups, cultures and their dynamics.

Large language models are becoming increasingly capable of imitating human-like responses. As my colleagues and I describe in a recent Science article, this opens up opportunities to test theories on a larger scale and with much greater speed.

But our article also raises questions about how AI can be harnessed for social science research while ensuring transparency and replicability.

Using AI in research

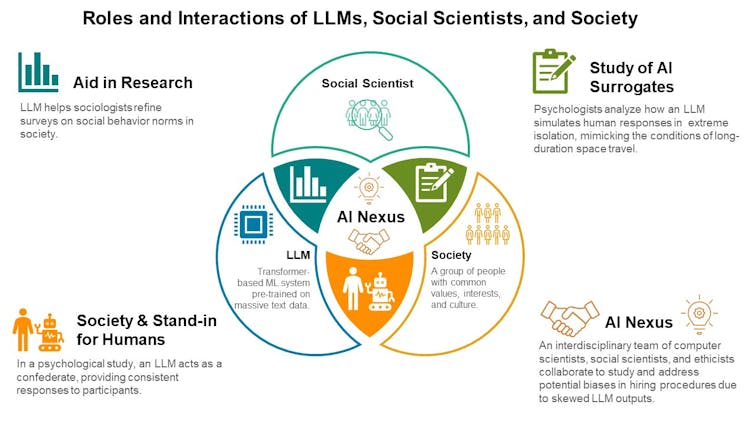

There are a number of possible ways AI could be used in social science research. First, unlike human researchers, AI systems can work non-stop, providing real-time interpretations of our fast-paced, global society.

AI could act as a research assistant by processing enormous volumes of human conversations from the internet and offering insights into societal trends and human behaviour.

Another possibility is using AI systems as actors in social experiments. A sociologist could use large language models to simulate social interactions between people to explore how specific characteristics, such as political leanings, ethnic background or gender, influence subsequent interactions.

Most provocatively, large language models could serve as substitutes for human participants in the initial phase of data collection.

For example, a social scientist could use AI to test ideas for interventions to improve decision-making. This is how it would work: first, scientists would ask AI to simulate a target population group. Next, scientists would examine how a participant from this group would react in a decision-making scenario. Scientists would then use insights from the simulation to test the most promising interventions.

Obstacles lie ahead

While the potential for a fundamental shift in social science research is profound, so are the obstacles that lie ahead.

First, the narrative about existential threats from AI could pose an obstacle. Some experts are warning that AI has the potential to bring about a dystopian future, like the infamous Skynet from the Terminator franchise, in which sentient machines bring about humanity’s downfall.

These warnings might be somewhat misguided, or at the very least, premature. Historically, experts have shown a poor track record when it comes to predicting societal change.

Present-day AI is not sentient; it’s an intricate mathematical model trained to recognize patterns in data and make predictions. Despite the human-like appearance of responses from models such as ChatGPT, these large language models are not human stand-ins.

Large language models are trained on a vast number of cultural products including books, social media texts and YouTube replies. At best, they represent our collective wisdom rather than an intelligent individual agent.

The immediate risks posed by AI are less about sentient rebellion and more about mundane issues that are nonetheless significant.

Bias is a major concern

A primary concern lies in the quality and breadth of the data that trains AI models, including large language models.

If AI is trained primarily on data from a specific demographic — like English-speaking individuals from North America, for example — its insights will reflect these inherent biases.

This bias reproduction is a major concern because it could amplify the very disparities social scientists strive to uncover in their research. It’s imperative to promote representational fairness in the data used to train AI models.

But such fairness can only be achieved with transparency and access to information about the data AI models are trained on. So far, such information remains a mystery for all commercial models.

By appropriately training these models, social scientists will be able to more precisely simulate human behavioural responses in their research.

AI literacy is key

The threat of misinformation is another substantial challenge. AI systems sometimes generate hallucinated facts — statements that sound credible, but are incorrect. Since generative AI lacks awareness, it presents these hallucinations without any indication of uncertainty.

People may be more likely to seek such confident-sounding information, favouring it over less definite, but more accurate information. This dynamic could inadvertently spread false information, misleading researchers and the public alike.

Moreover, while AI opens up research opportunities for hobbyist researchers, it could inadvertently fuel confirmation bias if users only seek information that aligns with their pre-existing beliefs.

The importance of AI literacy can’t be overstated. Social scientists must educate users on how to handle AI tools and critically assess their outputs.

Striking a balance

As we forge ahead, we must grapple with the very real challenges AI presents, from bias reproduction to misinformation and potential misuse. Our focus should not be on preventing a far-off Skynet scenario, but on the concrete issues that AI brings to the table now.

As we continue to explore the transformative potential of AI in the social sciences, we must remember AI is neither our enemy nor our saviour: it’s a tool. Its value lies in how we use it. It has the potential to enrich our collective wisdom, but it can equally foster human folly.

By striking a balance between leveraging AI’s potential and managing its tangible challenges, we can guide the integration of AI into social sciences responsibly, ethically, and to the benefit of all.

- is a Professor of Psychology, University of Waterloo

- This article first appeared on The Conversation